In reviewing SEO opinion, most experts tend to think that time on site, also known as dwell time or average session duration, is probably a ranking factor. Intuitively, this makes sense: sites that keep their visitors longer are better sites, right? So we tested it to see whether it is indeed a ranking factor.

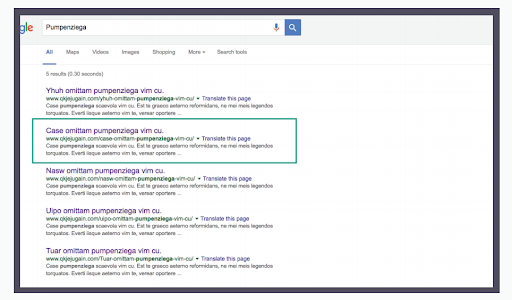

For this test, five identical test pages were created and indexed. The page in the #3 ranking position was chosen as the experiment page.

Five Mechanical Turk projects were set up to send traffic to these pages and to manipulate average session durations. Mechanical Turk is a platform where you can pay real workers to complete online tasks. While it is not specifically an SEO program like Crowdsearch, Mechanical Turk seemed ideal for our purposes: within the program, we could pay workers to click where we wanted them to click.

For the experiment page, users were instructed to go to the test page, find six words and fill them out in a form. By having users click a link on the experiment page, the goal was to record a higher average session duration on that page than on the other four pages.

For the other test pages, users were instructed to go to the pages and fill out the first word on the page. We assumed that this would take a lot less time than finding six words.

We assumed that some users would make mistakes and take longer, or might be faster than expected. We also assumed that any tool used to monitor traffic would be an imperfect measuring tool and might miss some of the data. However, if the experiment page has a higher average session duration than the other pages, we would expect it to move up in the rankings if average session duration is a ranking factor.

To hedge our bets, we also asked members of the SIA to simply stay on the page for over a minute, to ensure that our experiment page would get additional time on site.

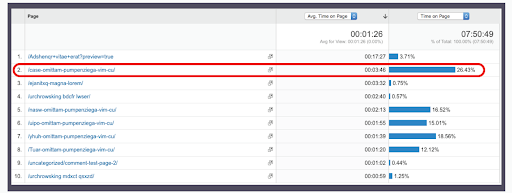

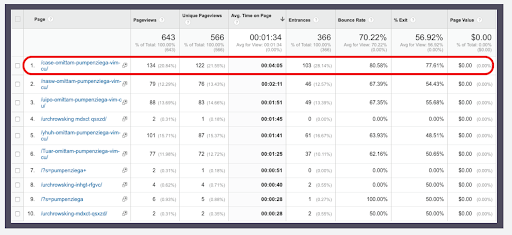

Google Analytics was set up on the test pages to monitor traffic and average session durations.
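To make the comparison concrete, here is a minimal Python sketch of how average session duration could be computed per page, using the usual definition of total session time divided by number of sessions. The page names and durations are made up for illustration and are not the data from this test; `average_session_duration` is a hypothetical helper, not a Google Analytics API.

```python
from collections import defaultdict

# Hypothetical session records: (page, session duration in seconds).
# These numbers are invented for illustration; they are not the test's data.
sessions = [
    ("experiment", 95),
    ("experiment", 120),
    ("experiment", 70),
    ("control-1", 12),
    ("control-1", 20),
    ("control-2", 15),
    ("control-2", 9),
]


def average_session_duration(records):
    """Return {page: total session seconds / number of sessions}."""
    totals = defaultdict(lambda: [0, 0])  # page -> [total_seconds, session_count]
    for page, seconds in records:
        totals[page][0] += seconds
        totals[page][1] += 1
    return {page: total / count for page, (total, count) in totals.items()}


averages = average_session_duration(sessions)

# List pages from longest to shortest average session duration.
for page, avg in sorted(averages.items(), key=lambda item: item[1], reverse=True):
    print(f"{page}: {avg:.1f}s")
```

If the manipulation worked as intended, the experiment page should sit at the top of this list by a clear margin.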

The experiment page received plenty of visits with excellent time on site and moved up to the #2 position. It did not reach #1, even though its average session duration was much higher than that of the other pages.

While we saw positive movement, it is not conclusive that it was because of time on site alone. It could have been because the experiment page received more visits than the other test pages.

It’s noteworthy that the page that moved into the #1 position and stayed there had a much better bounce rate than the experiment page, and the second-most visits of all the pages in the test.

There are some obvious problems in the data collected in this test, but we think the information is still useful. In terms of applying this to user metrics, we would focus our efforts on bounce rate and total number of visits. Only once we felt good about those metrics would we then consider time on site.
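As a rough illustration of the two metrics we would prioritize, the Python sketch below computes visits and bounce rate per page, treating a session with exactly one page view as a bounce (an assumption that approximates how analytics tools usually define it). The page names and page-view counts are hypothetical, not the figures from this test.

```python
# Hypothetical per-session page-view counts for each test page.
# A session with exactly one page view is counted as a bounce.
page_sessions = {
    "experiment": [1, 1, 2, 1, 3],
    "page-a": [2, 3, 1, 2],
    "page-b": [1, 1, 1],
}


def summarize(sessions_by_page):
    """Return {page: (visits, bounce_rate)} with bounce_rate between 0 and 1."""
    summary = {}
    for page, pageviews in sessions_by_page.items():
        visits = len(pageviews)
        bounces = sum(1 for views in pageviews if views == 1)
        summary[page] = (visits, bounces / visits if visits else 0.0)
    return summary


summary = summarize(page_sessions)

# Sort by bounce rate (lower first), then by visits (higher first).
ranked = sorted(summary.items(), key=lambda kv: (kv[1][1], -kv[1][0]))
for page, (visits, bounce_rate) in ranked:
    print(f"{page}: {visits} visits, {bounce_rate:.0%} bounce rate")
```

Looking at both numbers side by side makes it easier to spot a page that wins on visits but loses on bounce rate, which is the pattern we saw between the experiment page and the page that took #1.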

In this video, Clint talks about this test and his insights on time on site and the core web vitals update.

This is test number 58 – User Metrics: Is time on site a ranking factor?

It’s June 21, 2021 right now, and with the core web vitals user experience update coming out, there’s been a lot of talk about PageSpeed, time on site, user interactions with your website, etc., all being ranking factors. We were considering that and talking about that back in 2017; I think this test was done in 2017 or 2018.

We had our time proving it, and even back then people were talking about how user experience is a ranking factor, along with bounce rate and ping-ponging. If you don’t know the term, ping-ponging is going to a search, going to the site, and then going back to the search, which would trigger a negative user experience signal in Google and reduce your ranking. And vice versa: if you went to the site and never came back to the SERPs, that was a good thing.

What is going on now is that core web vitals is coming out, and there’s largest contentful paint, which is essentially what I would refer to as start render: the point where you can actually start seeing the website and start interacting with it as it pops up.

And then there’s one more: cumulative layout shift. Let’s say you have a responsive website and someone is on their phone and it loads up the website. Essentially, it’s sending the files for the desktop version but it’s also sending the information for the mobile version, and it will shift over a little bit to adjust for the screen size, and that’s perfectly normal to have. I work on a 27 inch monitor, you see this here. Some people work on a 13 inch monitor, some people work on tablets, smartphones, etc. So there are all these different screen sizes, and that’s why responsive technology is so awesome. But Google has decided that layout shift is a bad thing and that you shouldn’t have it. At the end of the day, it’s just kind of something that I think, with modern technology and modern web browsers, is expected, as long as it’s not crazy, you know what I mean? I’m going to play with this one here. Look at it like this: if it never shifts and never adjusts, that is worse for the user than it would be if it was responsive.
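For readers who want to see how layout shift turns into a number, here is a minimal Python sketch. It assumes the publicly documented definition: each shift is scored as impact fraction times distance fraction, and the page's cumulative layout shift is the largest "session window" of shifts, where shifts less than 1 second apart are grouped and a window is capped at 5 seconds. The function names and the sample shifts are hypothetical, and in practice browsers report these values rather than you computing them by hand.

```python
def layout_shift_score(impact_fraction, distance_fraction):
    """Score one shift: share of viewport affected times how far content moved."""
    return impact_fraction * distance_fraction


def cumulative_layout_shift(shifts, max_gap=1.0, max_window=5.0):
    """Return the largest session-window sum of layout shift scores.

    shifts: list of (timestamp_seconds, score), sorted by timestamp.
    A new window starts when the gap since the previous shift reaches
    max_gap, or when the current window has lasted max_window seconds.
    """
    best = 0.0
    window_sum = 0.0
    window_start = None
    previous_time = None
    for time, score in shifts:
        starts_new_window = (
            window_start is None
            or time - previous_time >= max_gap
            or time - window_start >= max_window
        )
        if starts_new_window:
            window_start = time
            window_sum = 0.0
        window_sum += score
        previous_time = time
        best = max(best, window_sum)
    return best


# Hypothetical page load: two shifts early on, two smaller ones a few seconds later.
example_shifts = [(0.2, 0.05), (0.6, 0.10), (3.0, 0.02), (3.4, 0.01)]
cls = cumulative_layout_shift(example_shifts)
print(f"CLS: {cls:.2f}")
```

Google's published guidance treats a score of 0.1 or less as good, so a small one-time adjustment on load is very different from content that keeps jumping around.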

We are inundated with people in all kinds of markets, internet marketing SEOs and agency SEOs, who say that core web vitals is the latest and greatest, and that we have to adapt to it and apply it because Google’s going to cancel your website and kick you out, etc., etc.

I don’t think it is as bad, or is going to be as bad, as what they say, and there are a couple of reasons why. One, I’ve been doing PageSpeed optimization. I was probably one of the first people that did PageSpeed optimization to sell it as a service. I figured out how to use W3 Total Cache. If you’ve never used that thing, it’s a really technical plugin, I won’t say high end, that required you to do a lot of testing. Most people who tried to implement it broke their sites, and I was one of those people. But I took the time to learn it, and after a few hours and a few days of messing around with it, busting the site and fixing it, busting the site and fixing it, I got the understanding and the ability to make sites really fast with it.

Typically, I can beat plugins like WP Rocket and Swift Performance, etc., with W3 Total Cache. I don’t use it a lot on clients’ websites because clients, on occasion, like to go in there and mess with things. If you’re using W3 Total Cache and you push the wrong check button, you can take down your website, and if you don’t know how to go back and repair those things, especially if you haven’t checked a whole bunch of things, that’s bad. With those other plugins, you can just kind of switch them on and off, and you’ll be alright.

I’ve been doing PageSpeed stuff forever, and I can tell you that PageSpeed optimization will get you ranking boosts, and PageSpeed optimization won’t get you ranking boosts. What I mean by that is this: say you have a website that is loading in 8 to 10 seconds. Let’s say it’s really heavy, it’s got a lot of images, it’s got videos and stuff, and it takes 8 to 10 seconds for it to come up, not completely, just to start and actually be interactive. You’re going to have problems. You were going to have problems back in 2017 and 2018, and you’re going to have problems in 2021.

Users just don’t have the patience for that in a lot of cases. And then on the flip side of that, if your page is loading in three seconds, and your competition’s pages are loading in two seconds, PageSpeed optimization is not going to get you all that much, you’re not going to get an improvement on rankings for it, you’re not going to get anything fixed because of it. So just keep that in mind when you’re thinking about user metrics.

In order for Google to know entirely what your user metrics are, they have to have access to it. In order to have access to those kinds of things, they’re going to get two different reports, essentially. So they have Chrome and then they have Google Analytics. Essentially, if your users are using Chrome, and they have the right setups turned on, etc. all that user data will get sent back to Google. It’s not entirely all like PageSpeed and bounce rate and all that stuff. But there are some things that Google gets back.

The same goes for Google Analytics: you send that to them, and they’re going to be able to apply and use that. That’s why we did a test on whether you would get a ranking boost from having Google Analytics on your site, and pages that didn’t have it were outranked by pages that had Google Analytics. It’s not a significant thing. Don’t go over there and jump in thinking, oh, if I add Google Analytics, I’m going to rank. It’s not the case. It’s just that pages that didn’t have it were outranked by pages that did.

So, and this is a question probably for everybody, how is Google supposed to be able to analyze 2 billion websites? To see the bounce rate, user experience, scroll, click-through rate, all that stuff, and then compile all this data and use that as a sorting factor?

And I’ll tell you, I don’t think they can. I think that page experience is going to be very insignificant when compared to everything else. I think it will have more effect on people who rely almost entirely on mobile traffic than it will on people who rely almost entirely on desktop traffic. And I don’t think that it’s going to be as big a deal as everyone’s made it out to be.

As a case in point, if you remember HTTPS, that was a big deal: you’ve got to do it or you’re going to fall out of the index. So everyone and their brother went out and put HTTPS on their websites. And then today, it doesn’t matter. It’s not even a ranking factor. So Google gave us a little mini ranking factor to get everyone to do it, and then took the ranking factor away, just so we can all dance like monkeys for Google. And I think the same thing is going on with core web vitals. It’s just another program that they want to use to speed up the web, to get everyone to do it a little bit better under the guise that users want a faster web. Obviously, we all want decent speed in web pages, but we’ve all been alive long enough in the internet age to know that if you have a 3G connection, a 4G connection is going to be faster. If you have a 4G connection, a DSL connection is going to be faster, etc.

This is not really about users. It’s about the ability of Google to process your websites faster so that they can crawl more of the website or the web better, so they can gather more data and information, so they can correlate that, create indexes and supplemental indexes, and then rank and sort based off of the information that they can actually get to. And they can’t get to a lot of this stuff, not in a meaningful way in order to apply it effectively for ranking purposes.

And that’s what this test showed us, too. We had time on site, we increased time on site, we used Mechanical Turk to do it, and the result was kind of a wash. The test page went up a little bit, but that could have been just more traffic: there were more clicks from search, which is something that Google can measure, right? It got more traffic from the search results and ergo, it ranked higher, which would make this almost a CTR test rather than a time on site test, right?

So that’s something to keep in mind. I know we discussed a lot. But the fact is that back then, time on site wasn’t a ranking factor, and even after core web vitals comes out, I doubt time on site is going to be a ranking factor either. That’s for all the reasons I said before, but in particular, there’s just no way for them to measure 2 billion plus websites, and I’m sure there are way more than that, along with all the analytics data that goes with them, and then correlate it and sort it. The computing power and the money that would take alone make that not feasible. So there’s some common sense you have to apply when you listen to some of these people.

So if your website is horrible and slow as hell, fix it, and you’re going to see your rankings improve. If your website’s decent, fix it just to make your users happy. If you’re loading in three seconds or faster on a mobile connection, and it’s not even full load that they’re worried about, it’s being able to actually use the website, then you’re going to be just fine.